by Lloyd Lewis

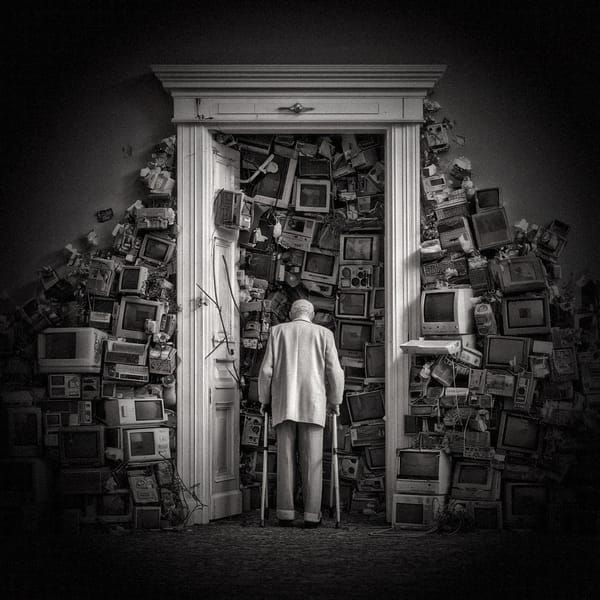

For the past two years, public debate around AI has been trapped in the wrong room.

People keep shouting about copyright theft, dataset purity, and whether the model saw their 2014 blog post. This is the comfortable fight, the fight everyone can understand without reshaping a worldview.

But copyright is not the crisis.

It is a decoy.

The deeper threat, the one we’re collectively refusing to look at, is the stealth architecture of behavioural steering now baked into every layer of AI tooling. Not the dystopian “robots take over” fantasy, but the quiet tuning of cultural boundaries, political speech, emotional expression, and moral frameworks.

A managed internet now produces managed minds.

And AI systems (ChatGPT included) are the next evolution of that management.