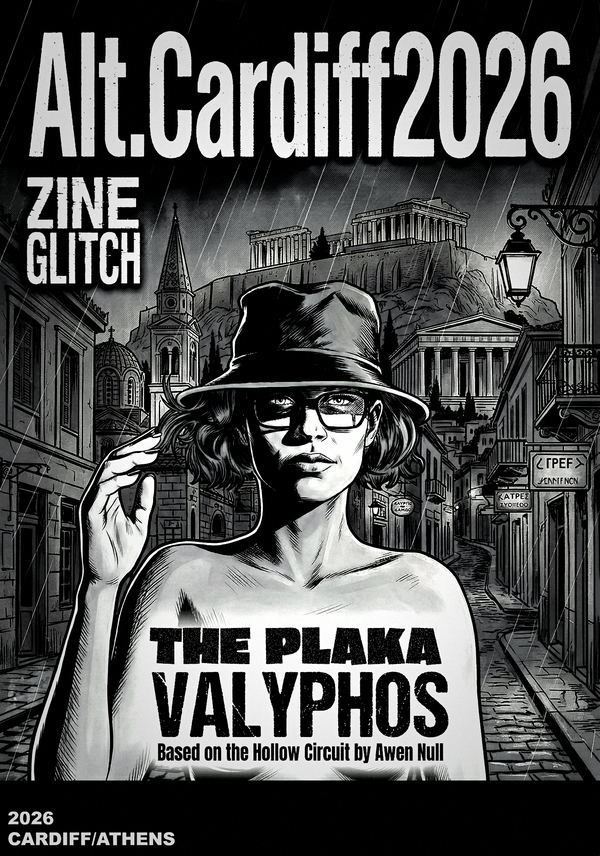

Art of FACELESS | March 2026

There is a version of this story in which Anthropic are the good guys.

They held their red lines when the Pentagon demanded otherwise. They were designated a "supply chain risk" by Pete Hegseth for refusing to remove safeguards around domestic mass surveillance and fully autonomous weapons. They went to court, as plaintiff rather than defendant, instead of capitulating. In a Silicon Valley landscape of near-total deference to the Trump administration, that is not nothing.

But we need to be precise about what Anthropic actually said while doing this. Because the picture that emerges from their CEO's own words is a good deal more complicated than the "ethical AI company stands up to military pressure" headline suggests — and the confusion at the heart of it should matter to every independent practitioner, artist, and researcher who uses these tools.

What Amodei Actually Wrote

In January 2026, Dario Amodei published a lengthy essay on AI and conflict. It is worth reading in full because it reveals something significant: the question Anthropic is wrestling with is not whether to work with the military. That question is settled. The question is how, and for what, and under what structural guarantees.

The essay frames Anthropic's position this way: democratic governments and militaries should be armed with the most advanced AI possible to combat "autocratic adversaries." AI for national defence — including things that kill people — is acceptable. The specific red lines are domestic mass surveillance of US citizens and fully autonomous lethal weapons systems. Everything else is, in Amodei's construction, on the table.

This was confirmed explicitly on CBS News last week, when Amodei stated that Anthropic had told the Department of Defense it was "OK with all use cases. Basically, 98 or 99% of the use cases they want to do, except for two."

That is not a safety-first position. That is a narrowly scoped objection to two specific applications, dressed in the language of principle.

And those two applications, it is worth noting, are specifically about the protection of American citizens from their own government. The framing offers no structural protection for anyone outside the United States — including, to be direct about it, everyone reading this on the .com site.

The Lawsuit

Anthropic's California lawsuit against the Department of Defense, filed three days ago, confirms something that had previously been reported but not officially documented: Claude Gov — the version of Claude deployed in military contexts — is explicitly designed to be less restrictive than the consumer product.

The lawsuit states directly that Anthropic "does not impose the same restrictions on the military's use of Claude as it does on civilian customers." Claude Gov can handle classified documents, military operations, and threat analysis in ways the version we interact with cannot.

There is a version of this that is unremarkable — of course, a government-facing product has different parameters. But the implication for users of the consumer product is significant. The company managing your subscription, processing your creative queries, and profiling your preferences is the same company running a parallel, explicitly less-restricted system for military use. The infrastructure is shared. The training feedback loops are shared. The company is the same.

Anthropic is simultaneously arguing that its safety principles are under attack from the Pentagon, and admitting in court documents that it already provides the Pentagon with a version of its product designed to circumvent those same safety principles for military applications. This is not hypocrisy, exactly. It is a structural contradiction that their current public framing cannot resolve.

The "Good Guys" Problem

Anthropic is the best option currently available among the major cloud AI providers. That remains true. OpenAI signed its Pentagon deal while airstrikes were underway in Tehran. Grok agreed to "all lawful purposes" without negotiation, in a procurement process that a US senator formally questioned as potentially corrupt. Gemini is reportedly approaching its own classified-systems deal. Against this landscape, Anthropic's refusal to remove two specific safeguards represents a genuine, if narrow, position.

But the "good guys in a bad field" framing that is being extended to Anthropic in some quarters — including by Anthropic's own communications — obscures what their CEO has actually said. Amodei wrote that his company has "much more in common with the Department of War than we have differences." He expressed less concern about AI making it easier to kill people than about the reliability of the technology and who controls it. He framed the acceptable use of AI as "all ways except those which would make us more like our autocratic adversaries" — a standard that explicitly endorses a very wide field of applications, including ones with significant lethal consequences.

This is not tree-hugging pacifism, as the White House's characterisation of Anthropic as "radical left, woke" would imply. Amodei is clear that he is not a pacifist. The disagreement between Anthropic and the Pentagon is a narrow technical and structural dispute between two institutions that largely share goals, not a principled stand against military AI.

What This Means for Independent Practitioners

We published our full analysis — The Dual-Use Betrayal — at artoffaceless.org earlier this month. The structural argument is laid out there in full. We won't repeat it at length here.

The short version is this: these are prosumer systems. They are built by and with their user communities. The consumer relationship is not incidental: it is how these systems improve, what funds their development, and what gives companies like Anthropic the social licence to operate as "safety-first" organisations. When the same system that processes your creative queries, your research, and your questions is simultaneously deployed in military operations (without disclosure, without consent, without opt-out), something foundational has been broken.

The Guardian's reporting today notes that the government has been using Claude for target selection and analysis in its bombing campaign against Iran. Anthropic, per the report, "has given no indication that it has an issue with this use case."

We do. And we think you probably do too.

Our Position — Stated Plainly

We are not calling for a boycott of Anthropic. The chain-reaction problem is real: there is no clean exit from this infrastructure for working practitioners, and we won't pretend otherwise. We have said this publicly, and we will continue to say it.

What we are saying is simpler and more fundamental:

Amodei's essay, the lawsuit, and the CBS interview reveal a company that is not primarily in conflict with military AI deployment. They are in conflict with two specific applications of military AI deployment. The distinction matters. The public discourse around the Pentagon standoff is obscuring this, and Anthropic's own communications are not correcting it.

Independent users of these systems deserve an honest account of what they are participating in. Not a version of events in which Anthropic's narrow red lines are presented as a comprehensive ethical position. Not a framing that erases the 98-99% of military use cases they have actively agreed to support.

The work is visible. The architecture is not.

That is the faceless protocol.

And right now, it applies to something rather more serious than photography.

Full research analysis, references, and documentation at artoffaceless.org →

Art of FACELESS is an independent multimedia research collective based in Cardiff, Wales, founded in 2010. Active UK trademarks include The Hollow Circuit™, The Veylon Protocol™, Cognitive Colonisation™, and Hyperstition Architecture™.