(Part II of III – The Garden Behind the Glass series)

Every age invents its own truth machines.

In ours, they promise to tell us what’s real — and what’s not.

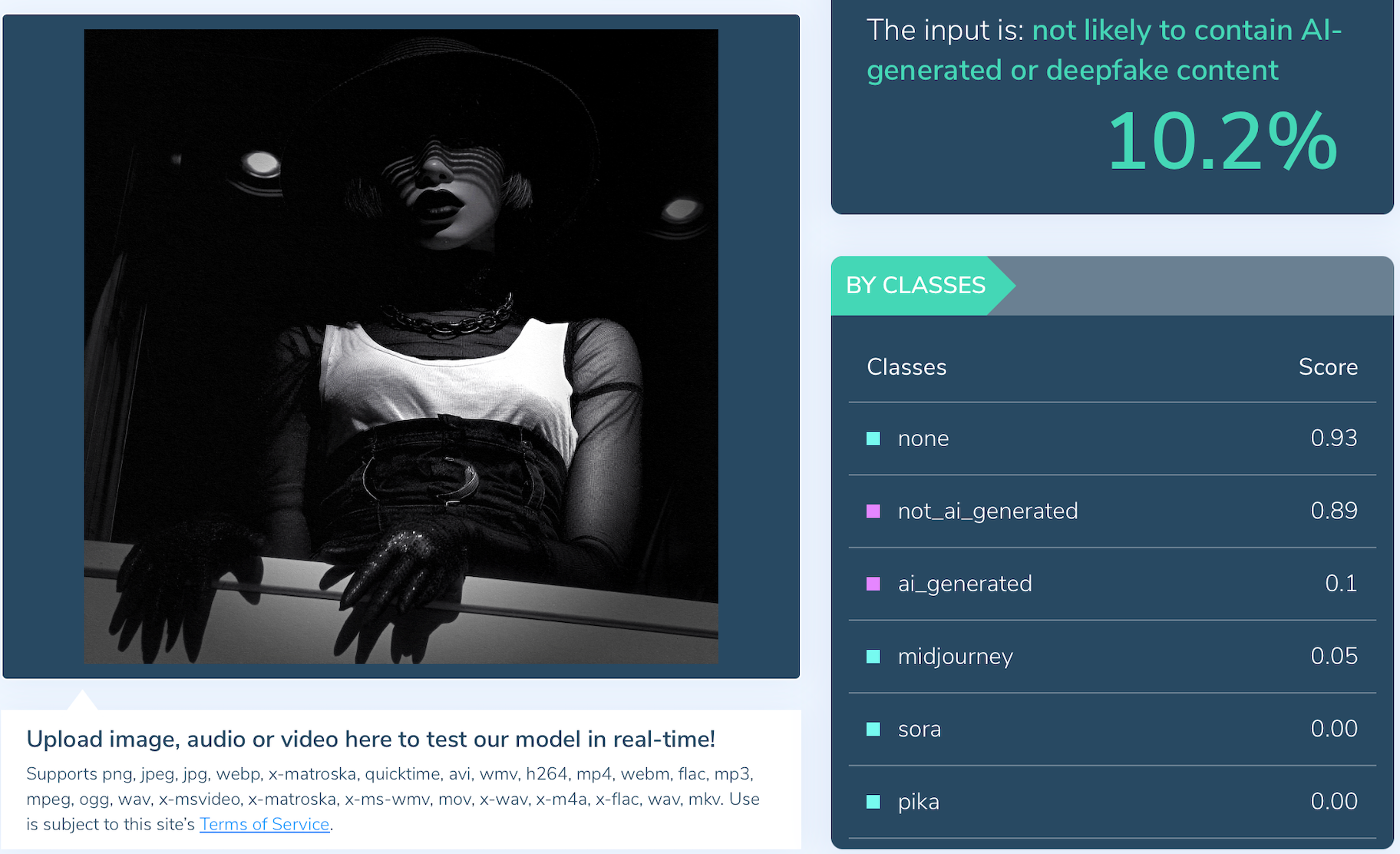

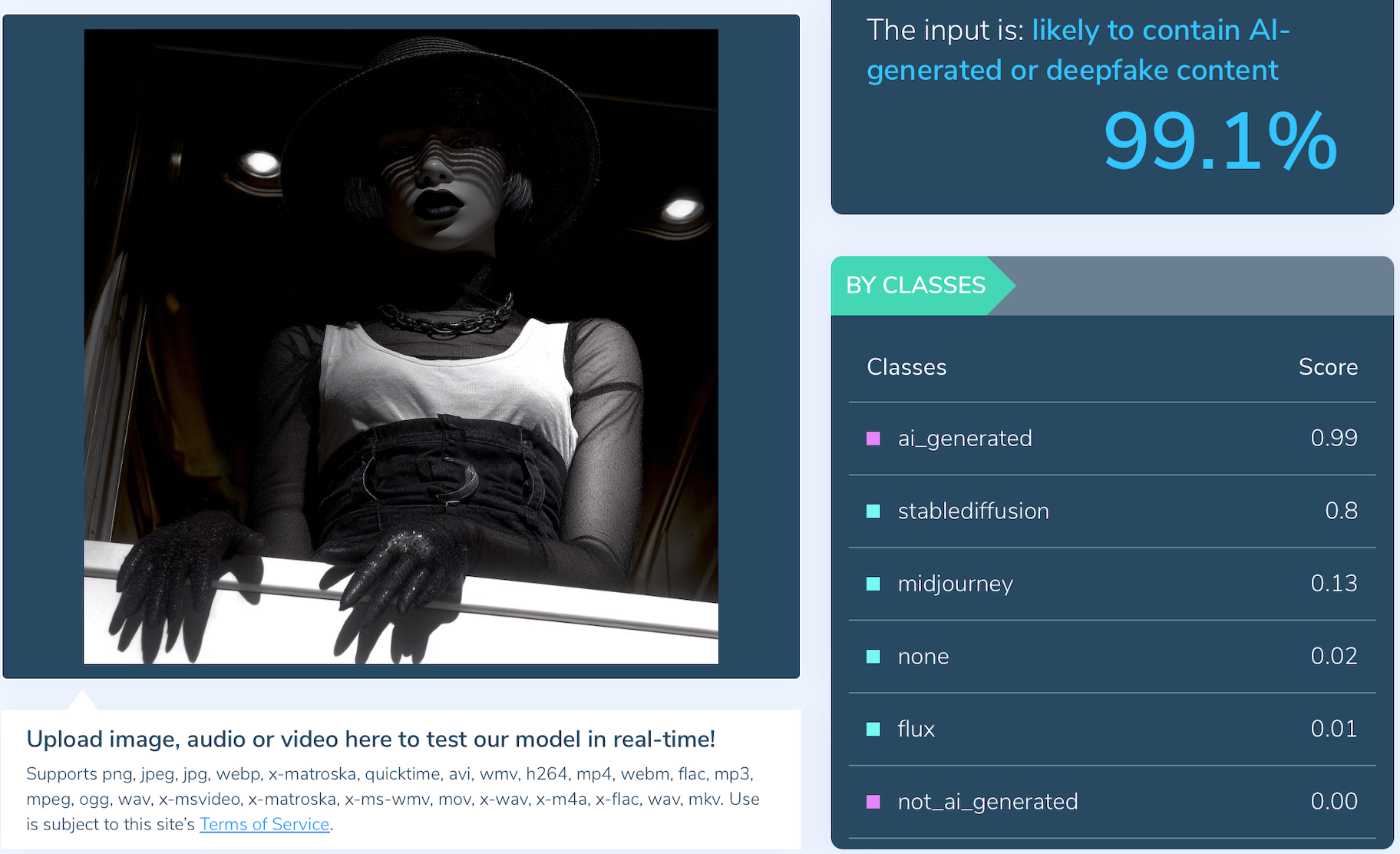

These so-called AI detectors speak in numbers, not nuance: 10 per cent, 99 per cent, 12 per cent.

The screens glow blue with certainty.

But the numbers are meaningless without one thing: validation.

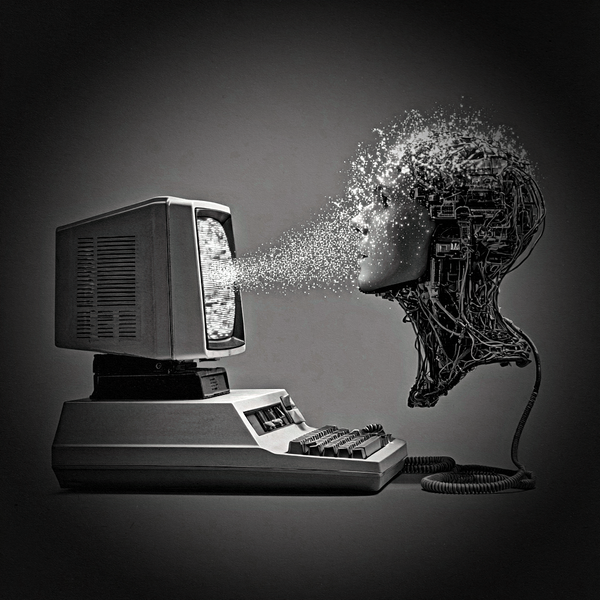

At Art of FACELESS, we’ve tested these tools against our own photographic archive — a library spanning the earliest digital Canon sensors to present-day hybrid film scans.

The same image, saved in different formats, returns opposite verdicts: one “authentic,” one “AI.”

The content never changed. The compression did.

Screengrabs from just one of our hundreds of tests on our own genesis archive. ©2025 Art of FACELESS

That’s not detection.

That’s pattern superstition.
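The format test described above can be sketched in a few lines. This is a minimal illustration, not the harness we run: the `Detector` interface, the `format_consistency` helper, the 0.5 threshold, and the deliberately flawed `toy_detector` are all assumptions made for the example, and the byte strings are placeholders rather than real photographs.

```python
from typing import Callable, Dict

# Hypothetical detector interface: takes encoded file bytes and returns an
# "AI likelihood" score between 0.0 and 1.0. An assumption for this sketch,
# not the API of any real product.
Detector = Callable[[bytes], float]


def format_consistency(detector: Detector,
                       variants: Dict[str, bytes],
                       threshold: float = 0.5) -> dict:
    """Score several encodings of ONE image and report whether the verdicts agree."""
    scores = {name: detector(data) for name, data in variants.items()}
    verdicts = {name: "AI" if score >= threshold else "authentic"
                for name, score in scores.items()}
    return {
        "scores": scores,
        "verdicts": verdicts,
        # A trustworthy detector should give one verdict per image, whatever the container.
        "consistent": len(set(verdicts.values())) == 1,
        "spread": max(scores.values()) - min(scores.values()),
    }


def toy_detector(data: bytes) -> float:
    """Stand-in that keys on the file signature, not the picture.

    Deliberately flawed, to show the kind of failure the check exposes.
    """
    return 0.99 if data.startswith(b"\xff\xd8\xff") else 0.12


# Placeholder bytes standing in for the same photograph saved twice.
variants = {
    "png": b"\x89PNG\r\n\x1a\n" + b"same picture",
    "jpeg": b"\xff\xd8\xff\xe0" + b"same picture",
}

report = format_consistency(toy_detector, variants)
print(report["verdicts"])    # {'png': 'authentic', 'jpeg': 'AI'}
print(report["consistent"])  # False
```

Swap `toy_detector` for a call to whichever tool is under test. If `consistent` comes back `False` for a single image, the tool is reacting to the encoding, not to the content.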

In regulated science, no system can touch a patient or a process without formal validation — a rigorous, independent audit proving it performs as claimed, across time, environment, and operator.

Without validation, you’re not measuring the world.

You’re reproducing an error with greater confidence.
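What "performs as claimed" means can be made concrete. The sketch below assumes a corpus where the true origin of every work is known, and tallies how often a detector's verdicts match it. The names `ValidationCounts` and `tally` are hypothetical, and the six labels are illustrative, not results from our archive. A real validation would repeat this across time, environment, and operator.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class ValidationCounts:
    true_pos: int = 0   # AI-made work, flagged as AI
    false_pos: int = 0  # human-made work, flagged as AI (a false accusation)
    true_neg: int = 0   # human-made work, passed as authentic
    false_neg: int = 0  # AI-made work, passed as authentic

    @property
    def false_positive_rate(self) -> float:
        """Share of human-made works wrongly flagged as AI."""
        total = self.false_pos + self.true_neg
        return self.false_pos / total if total else float("nan")

    @property
    def sensitivity(self) -> float:
        """Share of AI-made works correctly flagged as AI."""
        total = self.true_pos + self.false_neg
        return self.true_pos / total if total else float("nan")


def tally(flagged_as_ai: Sequence[bool], is_ai: Sequence[bool]) -> ValidationCounts:
    """Compare detector verdicts with known ground truth, item by item."""
    if len(flagged_as_ai) != len(is_ai):
        raise ValueError("each verdict needs exactly one ground-truth label")
    counts = ValidationCounts()
    for flagged, truth in zip(flagged_as_ai, is_ai):
        if flagged and truth:
            counts.true_pos += 1
        elif flagged and not truth:
            counts.false_pos += 1
        elif not flagged and truth:
            counts.false_neg += 1
        else:
            counts.true_neg += 1
    return counts


# Illustrative labels only: two AI-made works, four human-made works.
is_ai = [True, True, False, False, False, False]
flagged_as_ai = [True, False, True, False, False, False]

counts = tally(flagged_as_ai, is_ai)
print(counts.false_positive_rate)  # 0.25
print(counts.sensitivity)          # 0.5
```

The false positive rate is the figure that matters most to an accused artist: it is the share of genuine work the detector would condemn.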

And yet governments, publishers, and institutions are already integrating these unvalidated detectors into policy, grading, and censorship workflows.

History should have warned us.

Look at the UK Post Office Horizon scandal: software treated as infallible, oversight ignored, lives destroyed.

Now imagine that logic extended to every artist, writer, or photographer accused of “using AI” by a mis-trained model.

We don’t name the tools.

They don’t deserve the credibility of citation until they’ve been certified.

Only a validated system — trained, tested, and audited within a known corpus — can be trusted to interpret authorship.

That’s why our own AOF infrastructure stays sealed within what we call the Walled Garden.

Our models are trained exclusively on decades of in-house data: music, images, text, and 3D renders, all traceable to their original sources.

We know every pixel’s origin because we were there when it was made.

In 2026, we’ll begin teaching other artists how to do the same — how to build ethical, closed-loop AI systems that can be validated like scientific instruments.

This won’t be free. Sustainability demands reciprocity.

Our subscriptions remain finite because once the gate closes, it stays closed.

The message is simple:

If a detector isn’t validated, it isn’t the truth.

It’s just a mirror reflecting the bias of its creators.

And the more it’s used, the deeper that bias is baked into the world.