Art of FACELESS | artoffaceless.com | February 2026

We have withdrawn our image portfolios from Threads and Instagram, along with those on Tumblr, Mastodon, and Bluesky that were linked to Pinterest. This is not a temporary measure. It is a considered response to a systemic failure in how social platforms currently classify creative work, and a refusal to have 14 years of documented creative practice misrepresented by automated systems that cannot distinguish between generated content and skilled digital craft.

What Is Actually Happening

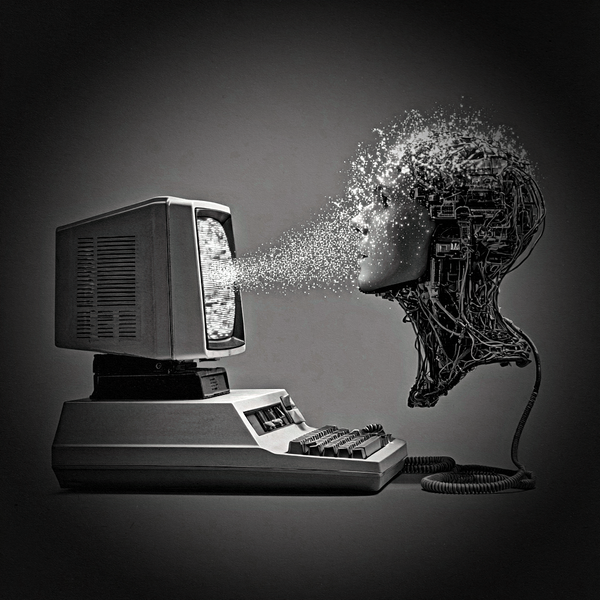

Pinterest triggered this statement. Their platform is currently applying automated "AI Modified" labels to images produced entirely in Adobe Photoshop — software with a 30-year history as the industry standard for legitimate digital craft. If you have used any Adobe Creative Suite tool in your workflow, Pinterest's classifier is flagging your work as synthetic. Not sometimes. Systematically.

Meta's Threads compounded this with a platform culture of hostility toward anything algorithmically adjacent to AI — a witch-hunt atmosphere where creators are presumed guilty and abuse from other users functions as an informal enforcement mechanism. Instagram follows the same labelling logic under the same ownership.

These platforms now use classifier systems, trained primarily to detect generative AI output, to apply "AI Modified" or "Made with AI" labels to images. These systems are failing: the estimated false positive rate across the independent creative community is 50%, a figure consistent with our own testing.
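For readers unfamiliar with the term, a false positive rate is the share of human-made images that a classifier wrongly flags as AI. The following is a minimal Python sketch of that calculation, assuming a hand-labelled sample. The `Sample` type, the function name, and the five records are hypothetical placeholders chosen for illustration; they are not our test data and not any platform's method.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    human_made: bool  # ground truth, e.g. from the creator's own workflow records
    flagged_ai: bool  # whether the platform applied an AI label


def false_positive_rate(samples):
    """Return the share of human-made images that were flagged as AI."""
    human = [s for s in samples if s.human_made]
    if not human:
        raise ValueError("no human-made samples to measure against")
    wrongly_flagged = sum(1 for s in human if s.flagged_ai)
    return wrongly_flagged / len(human)


# Hypothetical placeholder records, NOT real results.
samples = [
    Sample(human_made=True, flagged_ai=True),
    Sample(human_made=True, flagged_ai=False),
    Sample(human_made=True, flagged_ai=True),
    Sample(human_made=True, flagged_ai=False),
    Sample(human_made=False, flagged_ai=True),  # AI image: excluded from the rate
]

rate = false_positive_rate(samples)
print(f"False positive rate: {rate:.0%}")
```

Only human-made images enter the denominator, so a correctly flagged AI image does not lower the figure. This is why a classifier can catch generated content well and still mislabel a large share of human work.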

The images being flagged in our case are:

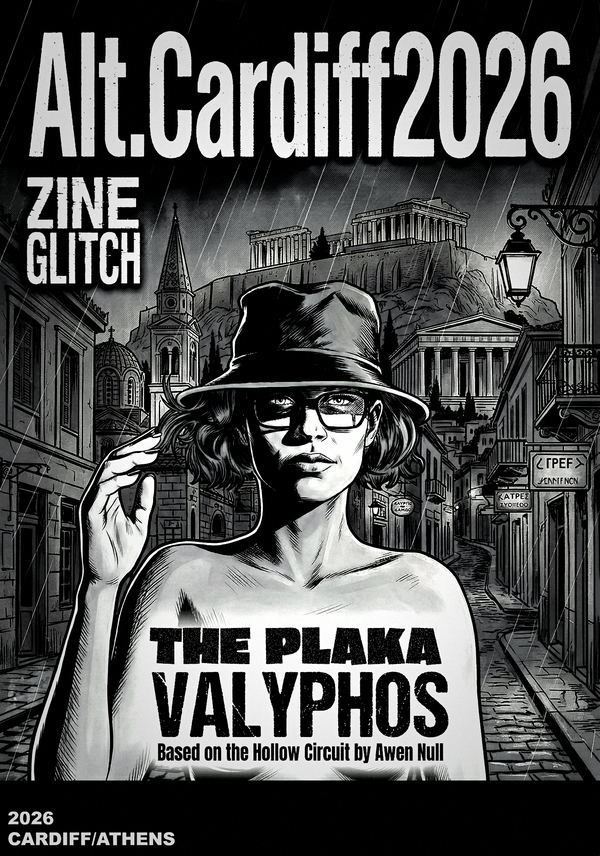

- 3D renders built in Reallusion Character Creator and iClone — software requiring years of technical skill, manual rigging, scene construction, and artistic direction

- Photoshop composites constructed from our own photography using layer-based workflows we have employed for over two decades

- Post-processed renders where AI functions as one specific tool in a documented pipeline — stylisation, not generation

None of this is "AI-generated" in the sense these labels are intended to convey. The work exists in documented form, with timestamped workflow stages, provenance trails, and in several cases active UK trademark protection.

The classifiers do not care. They are flagging the aesthetic — high-contrast tones, clean renders, deliberate stylisation — as synthetic, because these characteristics overlap with generative AI output. The result is that technically accomplished, human-directed work is being penalised for looking too good.

The Deeper Problem

This is not simply a technical error to be corrected through appeals or metadata scrubbing.

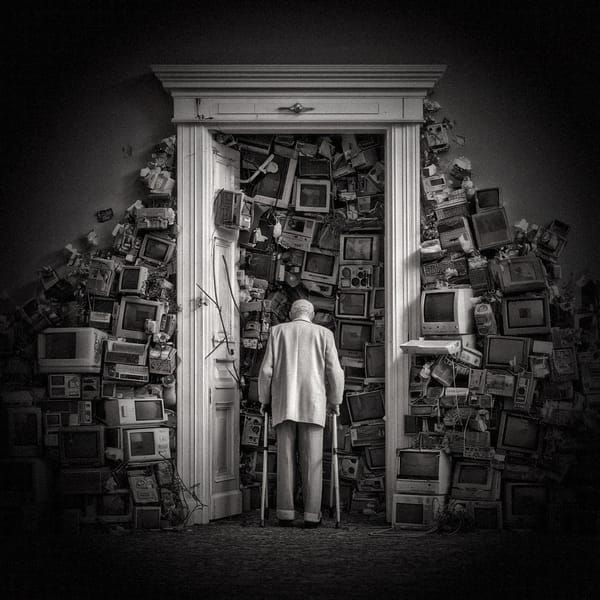

The emerging requirement for digital artists to "dirty up" their work — to introduce artificial noise, strip provenance data, degrade their exports — in order to pass automated authenticity checks is an inversion of everything these systems claim to protect. It demands that skilled craft disguise itself as imperfect to be legible as human.

It also creates a structural penalty for disabled artists who use AI-assisted tools as accessibility accommodations. Current classifiers make no distinction between generative AI replacing human creative decisions and assistive AI enabling a human to execute them. The EU AI Act's provisions on human oversight have not translated into platform implementation. The automated bot does not read the law.

We have spent years developing transparent, documented methodologies specifically to demonstrate human creative authorship in AI-assisted workflows — the kind of rigorous provenance documentation that should make classification straightforward. It doesn't matter. The bot flags the aesthetic anyway.

Our Position

We are not anti-platform. We are anti-complicity in misrepresentation.

We will not invest creative resources into platforms where our work will be systematically mislabelled, where appeals processes are slow and opaque, and where the burden of proof falls entirely on the creator. We will not normalise the practice of degrading our files to pass an automated test.

Our image work will remain accessible through our own sites while we reevaluate.

What We Are Calling For

This is not a problem Art of FACELESS faces alone. 3D artists, photographers, composite artists, illustrators, and disabled creatives using assistive tools are being caught in the same net.

Platform operators need to:

- Implement human review pathways that are accessible and timely — not 30-day appeals queues

- Distinguish algorithmically between generative AI and AI-assisted tools in documented human-directed workflows

- Acknowledge the false positive rate and publish transparency data on classifier accuracy

- Build exemption frameworks for documented professional practice and accessibility use cases

We will be documenting cases of misclassification as part of the Chronicles of The Hollow Circuit archive, alongside the broader pattern of platform algorithmic overreach we have been tracking since 2012.

The irony is not lost on us. The systems built to defend human creativity are currently its most active suppressors.

Art of FACELESS is an independent creative research collective established in Cardiff, Wales in 2010. Active trademark holder: The Hollow Circuit™, The Veylon Protocol™, Cognitive Colonisation™, Hyperstition Architecture™.