The UK’s Online Safety Act, Substack’s compliance, and why this matters for legal creators and independent publishers

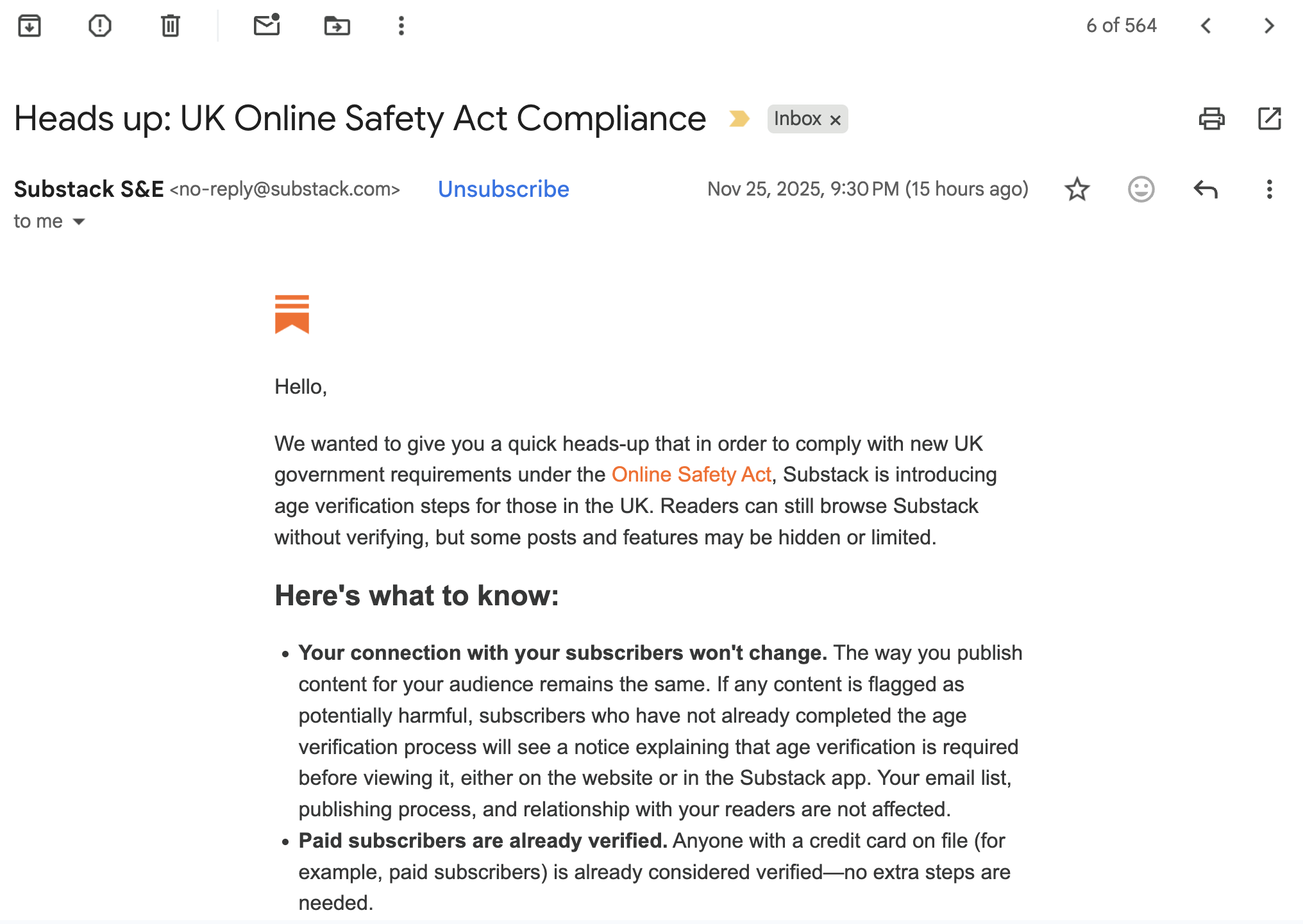

Another friendly, upbeat, corporate email has landed in the inbox.

Another platform is introducing age-verification gates in order to comply with the UK Online Safety Act (OSA).

Here’s our position — clearly, calmly, and without ambiguity.

We fully support the prevention of illegal content.

Let’s be absolutely clear from the start:

- We do NOT support illegal material.

- We do NOT defend platforms that allow it.

- We do NOT oppose age verification in contexts where it protects children from demonstrable harm.

There is no grey area on that.

The issue is not the existence of safety measures.

The issue is the scope of the measures — and the collateral damage already being done to legitimate, legal creators.

The OSA is written so broadly that entire categories of lawful artistic expression are now treated as potentially harmful by default. This is what we are raising the alarm about.

WHAT’S BEING CAUGHT IN THE NET?

AOF evidence table / for clarity / 2025 and moving into 2026

The following categories are legal, common, and historically protected forms of expression — but are now routinely flagged, age-gated, or suppressed by large platforms trying to comply with “harm” definitions so vague they border on unusable.

1. Lived-experience writing about disability, illness or trauma

Often flagged for “harmful themes” despite being educational, supportive, or artistic.

Examples:

- memoirs of chronic illness

- accounts of eating disorder recovery

- bipolar disorder narratives

- depictions of disability discrimination

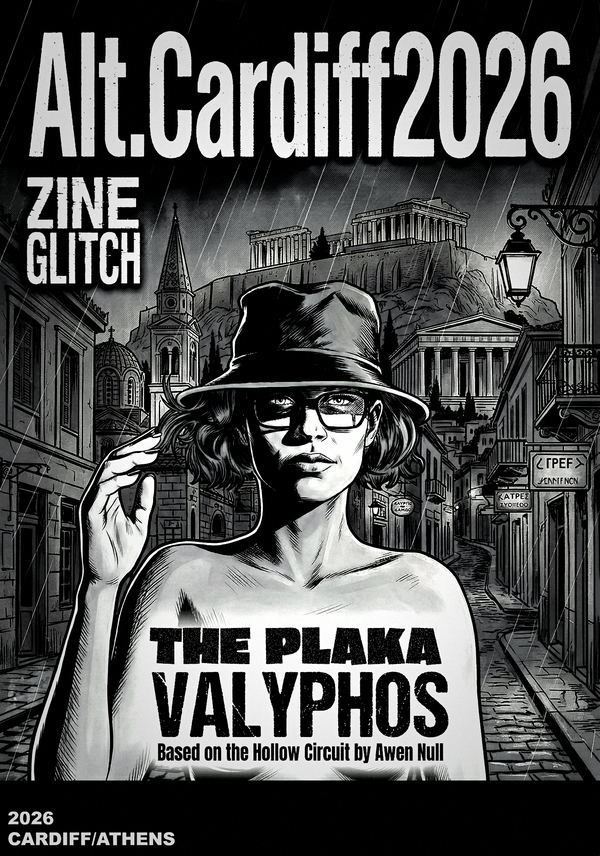

2. Fiction containing adult themes written for adults

Not pornography, not illegal — just adult storytelling.

Examples:

- literary fiction with sexuality

- sci-fi or fantasy with violence or mature themes

- queer narratives

- dark psychological or political fiction

3. Political commentary, satire, and civil critique

A rapidly expanding catch-all category because platforms fear liability.

Examples:

- criticism of government policy

- analysis of censorship

- social commentary on policing, healthcare, or welfare

- satire of political figures

4. Multimedia or experimental art that isn’t “family friendly”

This category is almost impossibly broad.

Examples:

- glitch art

- body-positive photography

- performance art with mature themes

- experimental hybrid works (visual novel, prose, sound design)

5. LGBTQ+ content

Historically and repeatedly mis-flagged by automated systems.

Examples:

- non-sexual queer representation

- LGBTQ+ history

- coming-out essays

6. Sex education, harm reduction or factual discussion of adult sexuality

Lawful, educational material routinely age-gated because platforms cannot differentiate it from sexually explicit content.

Examples:

- consent education

- feminist writing on bodily autonomy

- sex-positivity

- research-based sexual health information

7. Artistic nudity

Perfectly legal, culturally normal, centuries-old.

Examples:

- fine art, life drawing, conceptual photography

- historical discussion of art

- artistic self-portraits with non-sexual nudity

8. Survivors’ stories

Flagged because algorithms mistake describing harm for promoting it.

Examples:

- recovery from abuse

- addiction journeys

- witnessing violence

- anti-war testimony

The point is simple:

Under broad, catch-all frameworks, legal work about the human condition is treated as if it were dangerous, despite being entirely lawful.

THE CORE ISSUE: BLANKET SYSTEMS, NOT SAFETY

We oppose blanket, indiscriminate systems that cannot distinguish between illegal content and legal adult expression.

We oppose safety frameworks that force creators to self-censor lawful, vital, culturally important work.

We oppose policy design that treats nuance as a threat.

This is not rebellion. It is proportionate critique.

We are not arguing that platforms should host illegal material.

We are arguing that legal expression must not be strangled by legal overreach.

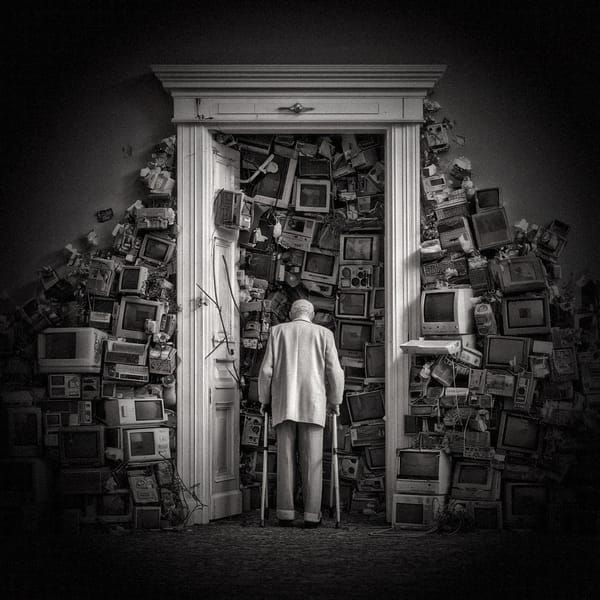

WHY THIS MATTERS FOR INDEPENDENT ARTISTS

Creators like us — working in fiction, photography, multimedia, and social commentary — are routinely:

- throttled

- hidden

- age-gated

- demonetised

- algorithmically buried

- flagged inaccurately

- forced to migrate from one platform to another

Not because the work is illegal.

Because the regulatory mesh is too coarse, and the simplest path for platforms is to restrict everything that might possibly incur liability.

THE AOF PHILOSOPHY

- We comply with the law.

- We oppose badly designed laws that punish legal art.

- We move readers to platforms with proportionate age verification (e.g. Patreon) where necessary.

- We maintain our own sites to prevent over-compliance from erasing lawful material.

- We use offline publishing as a parallel safeguard because print cannot be algorithmically censored.

This is not about non-compliance.

This is about avoiding cultural sterilisation.

This is about refusing to have legal, adult creative work treated as suspect.

This is about protecting nuance in a system designed to erase it.

References:

Screenshots of the email received from Substack: