Public Position Statement

AI Detection, Creative Misclassification, and the Surveillance of Authorship

March 2026

The Instrument Is Wrong

Tools claiming to detect AI-generated content are now being deployed in academic institutions, publishing, games, music, visual art, and commercial commissioning. One leading product — Originality.ai — markets itself as the "Most Accurate AI Detector" while its own output disclaimer reads:

"100% Confident That's AI — NOT to be interpreted as 100% of the text produced is AI-generated."

This is not a technical nuance. It is an admission that the tool cannot quantify what it claims to detect, issued in the same breath as a declaration of total confidence. In any validated analytical context — pharmaceutical assay, forensic testing, medical diagnostics — a tool exhibiting this contradiction would not pass regulatory scrutiny. It would not be used in consequential decision-making. It would not be used at all.

Yet these tools are now being used to determine whether a student cheated. Whether a writer is who they say they are. Whether sixteen years of documented creative practice is authentic.

What These Tools Actually Measure

AI detection tools do not identify AI. They measure statistical proximity to patterns associated with AI-generated output: low perplexity (how predictable each word is to a language model), syntactic consistency, and lexical density within a given register. These are also the measurable characteristics of skilled, disciplined, extensively practised human writing.
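
To make the perplexity point concrete, here is a minimal sketch of a threshold classifier built on a toy word-frequency model. Everything in it is an illustrative assumption: the function names, the tiny reference corpus, and the threshold are hypothetical, and commercial detectors are understood to use large neural language models rather than word counts. The structural point carries over regardless: the score depends only on how predictable the text is, never on who wrote it.

```python
import math
from collections import Counter

def train_unigram(corpus_tokens):
    """Toy model: add-one-smoothed word probabilities (hypothetical stand-in for a real LM)."""
    counts = Counter(corpus_tokens)
    vocab_size = len(counts) + 1               # one extra slot for any unseen word
    total = sum(counts.values()) + vocab_size
    probs = {word: (count + 1) / total for word, count in counts.items()}
    unseen_prob = 1 / total                    # probability assigned to unseen words
    return probs, unseen_prob

def perplexity(tokens, probs, unseen_prob):
    """exp of the average negative log-probability; lower means more predictable."""
    log_sum = sum(math.log(probs.get(tok, unseen_prob)) for tok in tokens)
    return math.exp(-log_sum / len(tokens))

def flag_as_ai(tokens, probs, unseen_prob, threshold):
    """Flags text purely because it is predictable. Authorship is never an input."""
    return perplexity(tokens, probs, unseen_prob) < threshold

# Illustrative data only: a tiny reference corpus and two human-written samples.
reference = "the cat sat on the mat the dog sat on the rug".split()
probs, unseen_prob = train_unigram(reference)

conventional = "the cat sat on the mat".split()         # fluent, regular phrasing
idiosyncratic = "zebras juggle quantum marmalade".split()  # unusual word choices

THRESHOLD = 10.0  # arbitrary cut-off chosen for this sketch
print(flag_as_ai(conventional, probs, unseen_prob, THRESHOLD))
print(flag_as_ai(idiosyncratic, probs, unseen_prob, THRESHOLD))
```

Both samples here are written by a person. Under these assumptions the conventional sentence falls below the threshold and is flagged, while the idiosyncratic one is not, which is the pattern the paragraph above describes.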

The implicit baseline is mediocre human output. Write better than average, and you register as suspicious. The tool is, in structural terms, a competence tax.

This is not a fringe concern. Published research has found that these detectors disproportionately flag writing by non-native English speakers, and similar misclassification has been reported for neurodivergent writers whose prose is unusually structured and for expert practitioners across technical and creative disciplines. The false positive rate is not a bug. It is an architectural consequence of what the tools were built to do.
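
The scale of the false positive problem can be sketched with basic base-rate arithmetic. The figures below are hypothetical and chosen only for illustration; they are not measurements of any product, and the function names are our own. The sketch shows how a seemingly small false positive rate still produces a steady stream of wrongly accused people, and how a higher rate for one group of writers sharply lowers the share of flags that are correct.

```python
def positive_predictive_value(prevalence, sensitivity, false_positive_rate):
    """Share of flagged texts that really are AI-generated (Bayes' rule)."""
    true_positives = prevalence * sensitivity
    false_positives = (1 - prevalence) * false_positive_rate
    return true_positives / (true_positives + false_positives)

def expected_false_accusations(n_submissions, prevalence, false_positive_rate):
    """Expected number of human-written texts that get flagged anyway."""
    return n_submissions * (1 - prevalence) * false_positive_rate

# Hypothetical inputs, for illustration only.
N_SUBMISSIONS = 1000
PREVALENCE = 0.10        # assumed share of submissions that are AI-generated
SENSITIVITY = 0.90       # assumed share of AI-generated texts the tool catches
FPR_GENERAL = 0.02       # assumed false positive rate for most writers
FPR_SUBGROUP = 0.20      # assumed higher rate for a disproportionately flagged group

ppv_general = positive_predictive_value(PREVALENCE, SENSITIVITY, FPR_GENERAL)
ppv_subgroup = positive_predictive_value(PREVALENCE, SENSITIVITY, FPR_SUBGROUP)
wrongly_flagged = expected_false_accusations(N_SUBMISSIONS, PREVALENCE, FPR_GENERAL)

print(f"Flags that are correct (general): {ppv_general:.0%}")
print(f"Flags that are correct (subgroup): {ppv_subgroup:.0%}")
print(f"Human writers wrongly flagged per {N_SUBMISSIONS}: {wrongly_flagged:.0f}")
```

Under these assumed inputs, roughly one flag in six is wrong for the general population, and for the subgroup most flags are wrong. Different inputs give different numbers, but no realistic choice brings the count of false accusations to zero.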

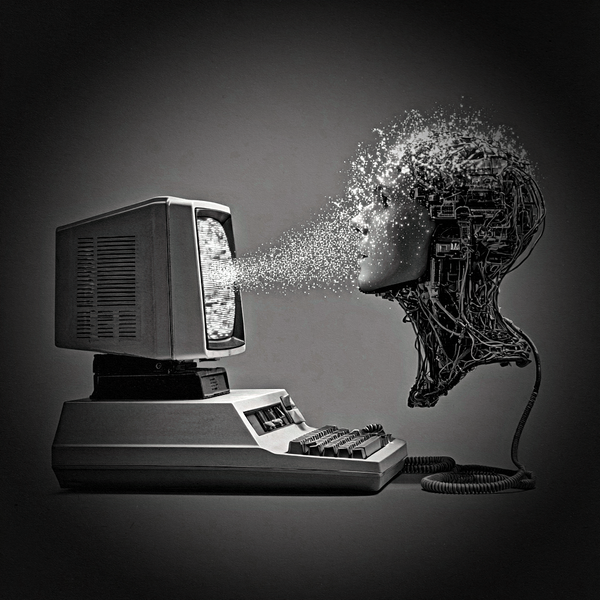

AI Is Already Everywhere. The Line Was Never Clean.

The current moral panic treats AI involvement as a binary — present or absent, contaminated or pure. This framing collapses immediately on contact with how creative production actually works.

Hardware synthesisers sold to professional music studios ship with AI-driven preset systems as standard. Popular video games use AI-generated assets as placeholders throughout production pipelines. Photoshop, Premiere, DaVinci Resolve — tools used by working professionals every day — have integrated generative AI features that activate automatically. The co-pilot is already in the cockpit. It has been for years.

This does not resolve questions of attribution, transparency, or labour rights around training data — those are legitimate and unresolved. But it means the question "did this involve AI?" is increasingly unanswerable in a meaningful way, and the attempt to answer it through automated classification of finished output is methodologically incoherent.

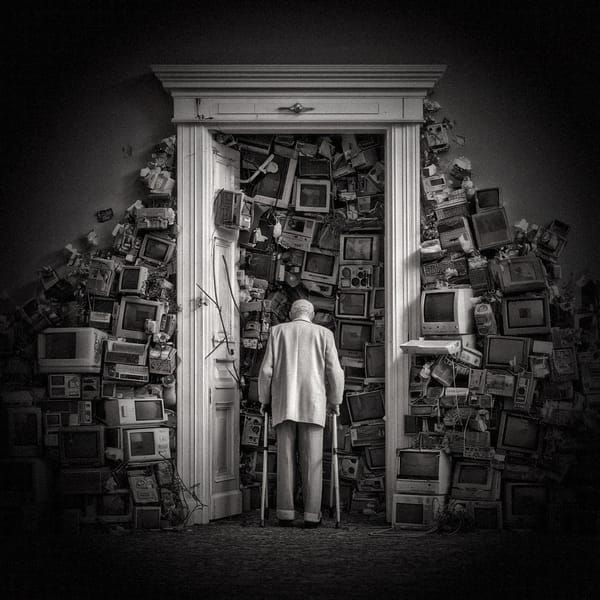

The Real Threat Is Surveillance, Not Generation

The generative AI discourse has been almost entirely focused on the wrong end of the pipeline.

Whether AI can make art is a philosophically interesting question with economically disruptive consequences. Whether AI systems can be used to surveil, classify, and delegitimise artists and creative workers is an active civil liberties concern.

When an unvalidated commercial tool's output can result in academic penalty, lost commissions, platform bans, cancelled contracts, or public accusations of fraud, that tool is functioning as an instrument of control. The accuracy of its classification is secondary to the power of its verdict. This is the architecture of surveillance: not that it is always right, but that challenging it is costly, slow, and structurally disadvantaged.

Creatives who are prolific, technically sophisticated, and stylistically consistent, the very qualities that define serious long-term practice, are most exposed to misclassification. The system punishes excellence and treats mediocrity as the acceptable human baseline.

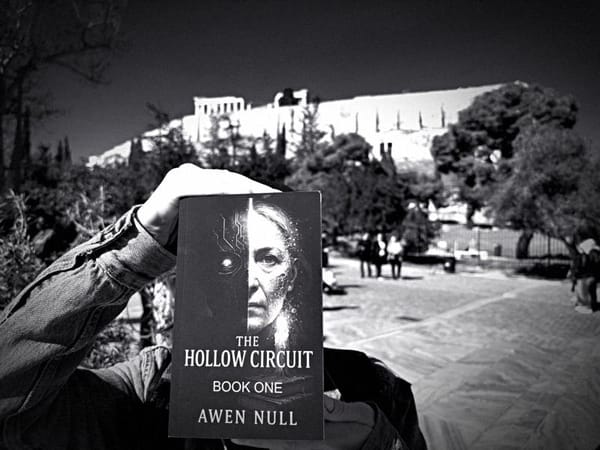

Art of FACELESS: Position

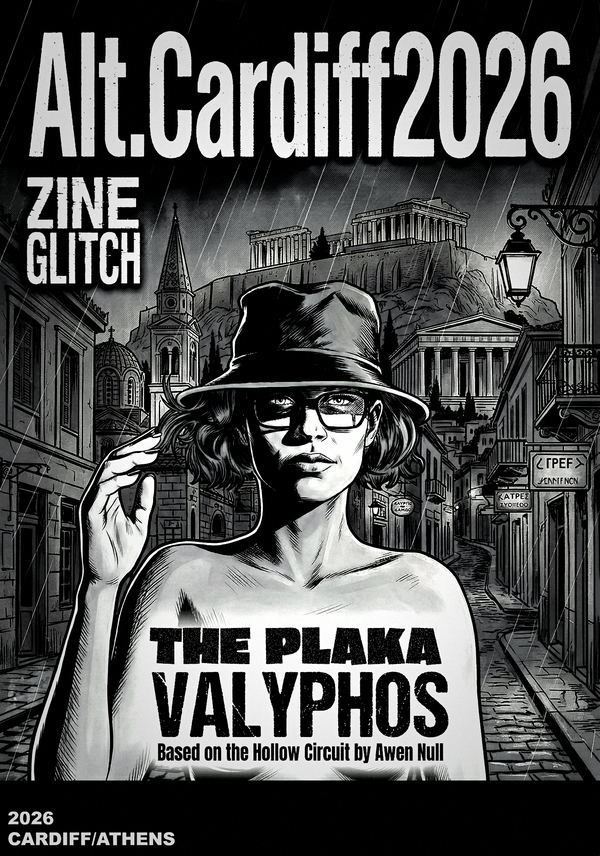

Art of FACELESS has operated since 2010 under a documented faceless protocol — a structural resistance to biometric identification and surveillance that preceded widespread facial recognition deployment by years. The work across fiction, music, photography, visual art, research, and multimedia has been continuously timestamped, trademarked, published, and archived throughout that period.

The Hollow Circuit™, The Veylon Protocol™, Hyperstition Architecture™, and Cognitive Colonisation™ are registered UK trademarks. The prior art trail is extensive, cross-referential, and predates the generative AI tools these detectors were built to identify.

We are not making a defensive claim about our own work. We are making an evidentiary observation about a broken instrument.

We support the regulation of high-risk AI deployment under the EU AI Act and similar frameworks. This specifically includes the use of AI classification tools in academic assessment and professional accreditation, which constitutes consequential decision-making under any reasonable reading of the Act. We have made formal submissions to the EU AI Office's Article 50 Code of Practice consultation and will continue to document, publish, and challenge misclassification wherever it occurs.

For Institutions Currently Deploying These Tools

If you are an academic institution, publisher, commissioning body, or platform using AI detection tools as part of assessment or gatekeeping:

You are deploying an unvalidated instrument in a high-stakes context. The tool's own disclaimer disavows the confidence its headline figure asserts. You carry liability for every false positive. The individuals most likely to be falsely flagged are your most capable, most experienced, most prolific contributors.

The appropriate response is not better tools. It is human judgment, contextual assessment, and evidentiary standards that do not outsource consequential decisions to a commercial classifier.

Art of FACELESS is an independent multimedia research collective founded in Cardiff, Wales, in 2010. artoffaceless.com | artoffaceless.org

This statement may be reproduced freely with attribution.

Disclaimer: This article is published as an opinion and research statement by Art of FACELESS. All factual claims are drawn from publicly available reporting. The views expressed represent the position of Art of FACELESS as an independent research collective and do not constitute legal, financial, or political advice. While every effort has been made to ensure accuracy at the time of publication, the situation described is ongoing and rapidly developing; details may have changed since this piece was written. Art of FACELESS has no commercial relationship with any AI company.